Full Stack Multiple Canary Release

1. What is Canary Release

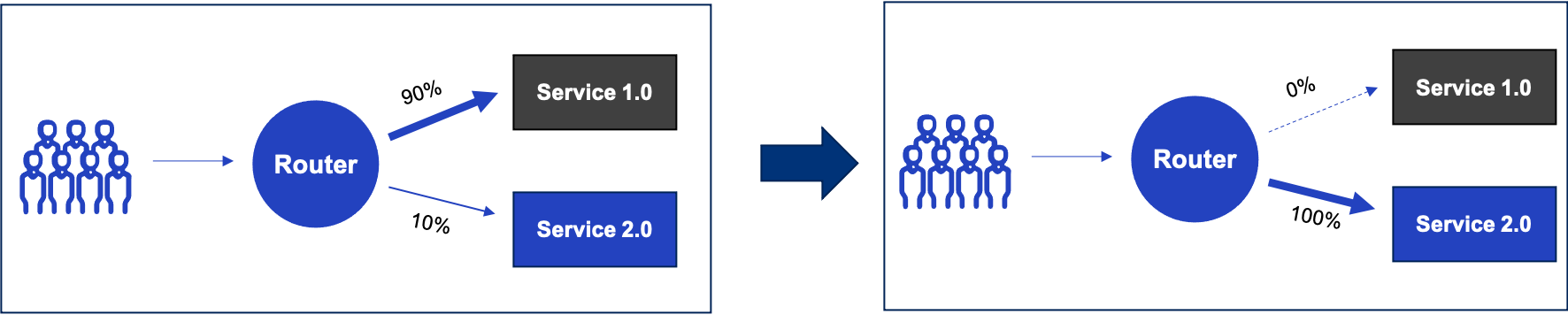

Let’s start with the simplest case. A canary release is a technique for reducing the risk of releasing a new version of software. The idea is to release the new version to a small number of users first and then gradually roll it out to everyone. For example, in this diagram, we send 10% of the traffic to the new version first, then gradually move more traffic to it, and finally drain the old version and take it offline.
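The gradual shift can be sketched in a few lines. This is a minimal illustration, not how any particular router implements it: the function name `pick_version` and the choice of hashing a user ID (so that a given user sees the same version on every request) are assumptions made for the example.

```python
import hashlib


def pick_version(user_id: str, canary_percent: int) -> str:
    """Return "canary" for roughly canary_percent% of users, else "primary"."""
    # Hash the user ID so the same user always lands in the same bucket.
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket is in 0..99
    return "canary" if bucket < canary_percent else "primary"


# Simulate 10,000 hypothetical users at a 10% canary share.
users = [f"user-{i}" for i in range(10_000)]
share = sum(pick_version(u, 10) == "canary" for u in users) / len(users)
print(f"canary share at 10%: {share:.1%}")
```

Raising `canary_percent` step by step moves more users over, and a user who was already on the canary stays on it, because their bucket number never changes.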

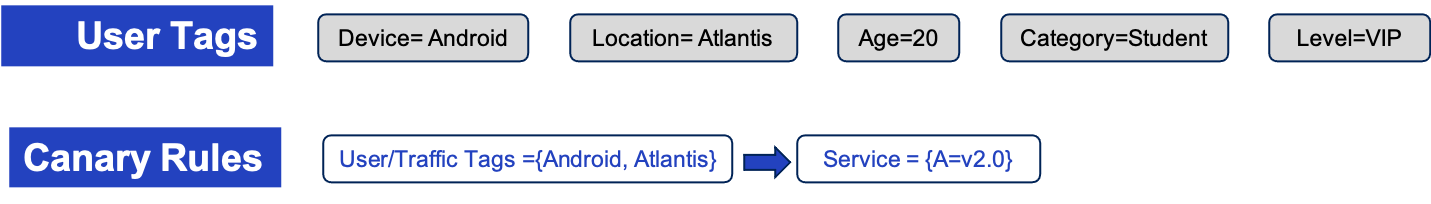

Throughout the testing process, we can label the traffic with various business tags, such as the device type (Android) or the user's location (Atlantis). Note that user tags should not be derived from IP addresses, which are inaccurate and inconsistent.

Then we can specify canary traffic rules that route a certain part of the users' traffic to a certain canary. For example, Android users from Atlantis are routed to the 2.0 canary version of Service A.
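As a rough sketch of such a rule, assume each request carries its tags as key/value pairs. The tag keys (`os`, `location`), the rule layout, and the primary version number `1.0` below are all hypothetical and chosen only for illustration.

```python
# A hypothetical canary rule: Android users from Atlantis go to Service A 2.0.
RULE = {
    "service": "service-a",
    "version": "2.0",
    "tags": {"os": "android", "location": "atlantis"},
}


def matches(rule_tags: dict, request_tags: dict) -> bool:
    """A rule matches when every tag it requires is present on the request."""
    return all(request_tags.get(key) == value for key, value in rule_tags.items())


def route(request_tags: dict, primary_version: str = "1.0") -> str:
    """Return the version of Service A that should receive this request."""
    if matches(RULE["tags"], request_tags):
        return RULE["version"]
    return primary_version


print(route({"os": "android", "location": "atlantis"}))  # 2.0
print(route({"os": "ios", "location": "atlantis"}))      # 1.0
```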

2. What is Full-Stack Canary Release

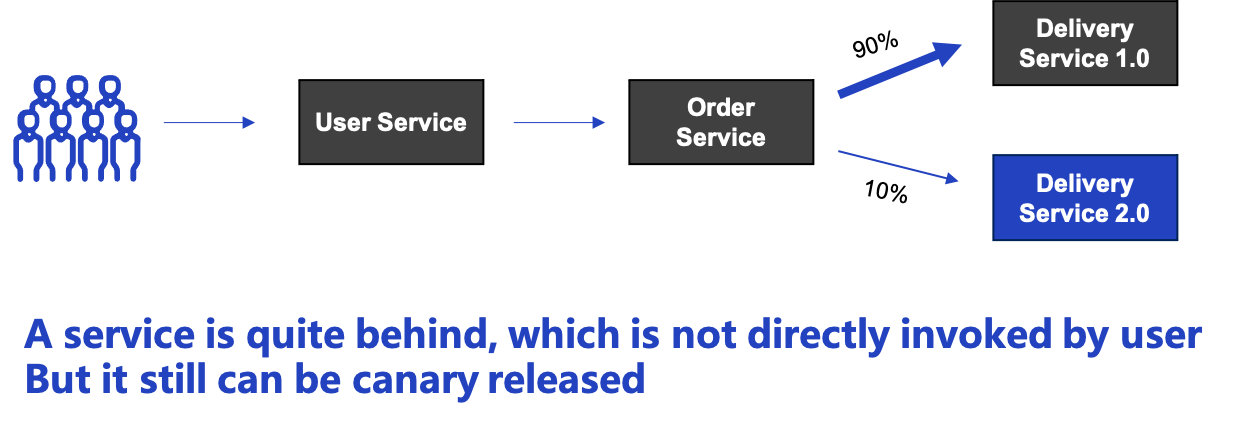

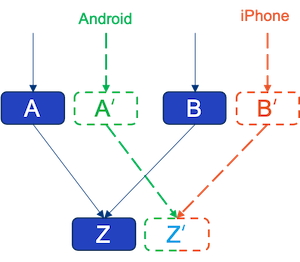

A canary for a single service covers only a limited set of scenarios. In reality, full-stack canary testing is more common. For example, the router cannot forward a client request directly to the canary version of a service when that service sits far back in the call chain, behind other services.

As shown in the figure, we have published a canary for Delivery, with the User and Order services sitting in front of it. In this case, we need to do two things to ensure that the traffic is routed correctly.

- The first is to pass through the user tags

- The second is to route traffic to the correct version of the next service at any endpoint of the chain.

At the implementation level, there are two categories of approaches to a full-stack canary release: changing the code of every service one by one, or using a non-intrusive platform-level solution. Changing the code is cumbersome, verbose, and prone to bugs.
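The two requirements above can be illustrated with a toy call chain. The chain, the canary table, and the tag key below are made up for the example, and the routing is reduced to a single function. It only shows why both requirements matter: if any hop drops the tags, every later hop falls back to the primary version.

```python
# Hypothetical call chain and canary table, for illustration only.
CHAIN = ["user", "order", "delivery"]
CANARIES = {"delivery": {"tags": {"location": "atlantis"}, "version": "canary"}}


def pick_version(service: str, tags: dict) -> str:
    """Route to the service's canary if the tags match, else to primary."""
    canary = CANARIES.get(service)
    if canary and all(tags.get(k) == v for k, v in canary["tags"].items()):
        return canary["version"]
    return "primary"


def call_chain(tags: dict, forward_tags: bool = True) -> list:
    """Walk the chain and record which version handled each hop."""
    path = []
    for service in CHAIN:
        path.append(f"{service}:{pick_version(service, tags)}")
        if not forward_tags:
            tags = {}  # a hop that drops the tags blinds every later hop
    return path


print(call_chain({"location": "atlantis"}))
# ['user:primary', 'order:primary', 'delivery:canary']
print(call_chain({"location": "atlantis"}, forward_tags=False))
# ['user:primary', 'order:primary', 'delivery:primary']
```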

3. Case Study - Canary Release in Real World

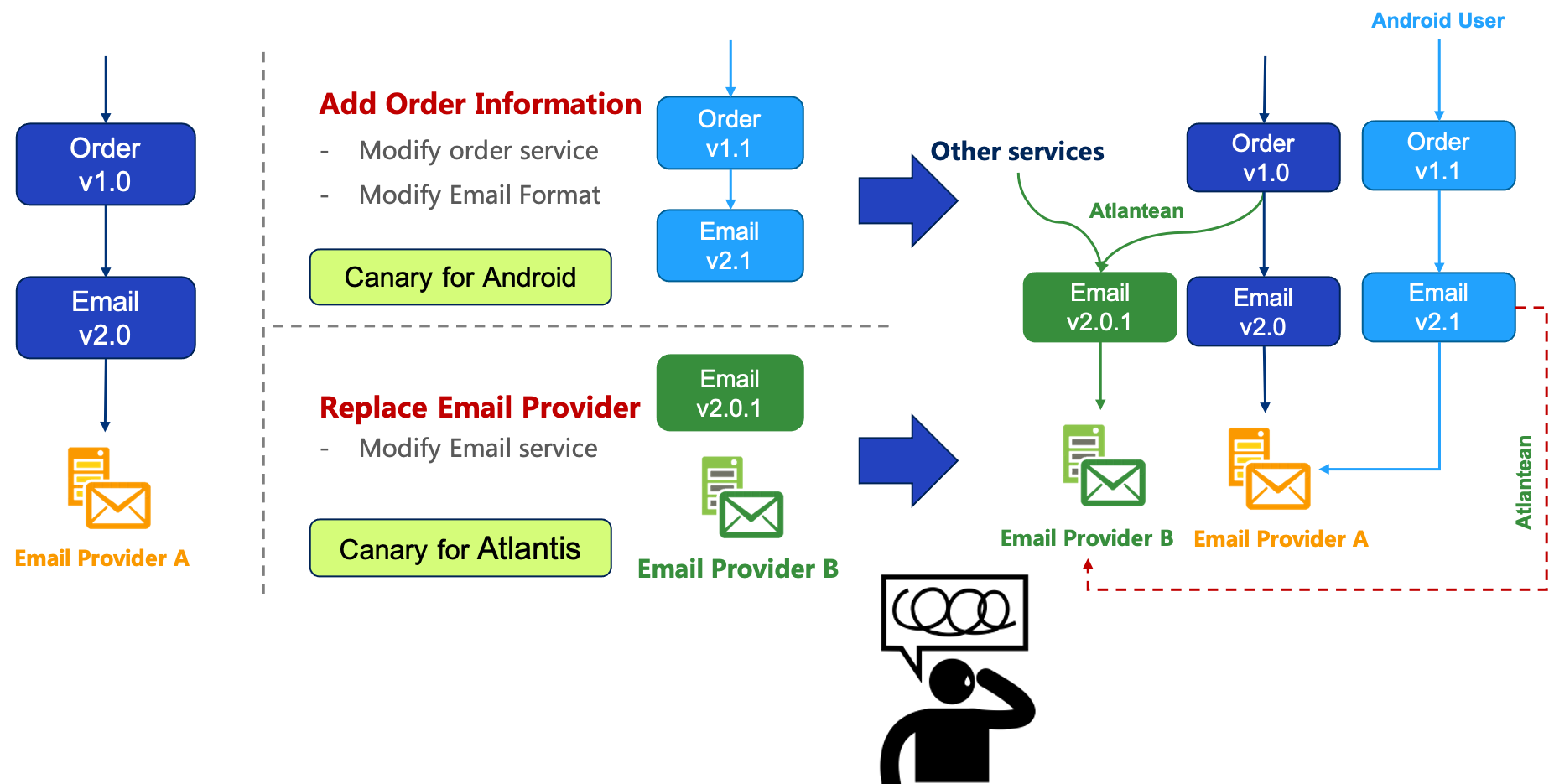

With the problem model just described, let’s use another practical example to illustrate the difficulty of multiple canary releases. Suppose there are two services, Order v1.0 and Email v2.0. The Order service calls the Email service, and the Email service uses the third-party Email Provider A.

We decided to add some information to the Order entity and test it with Android users only. Since the change to the Order entity requires changes in both services, we added Order v1.1 and Email v2.1 to apply it.

Then another team decided to replace Email Provider A with Email Provider B in the Email service, so we added Email v2.0.1 to test users from Atlantis only.

If both of these canary releases go to production at the same time, we have a problem.

As shown above, the light blue canary release needs Android users, and the green canary needs users from Atlantis. If an Android user on the light blue side is also from Atlantis, do we have to route them to the green canary as well, and vice versa? To implement this logic, the two canary releases would need to be aware of each other, which is very difficult to do in practice.

The dilemma is that Android users and Atlantean users overlap: some traffic comes from Android phones in Atlantis. Given the complexity of the traffic and the inconsistent experience for users, this leads to a heavy operational burden, and the canary traffic gets mixed up.

4. The Challenge of Multiple Canary Release

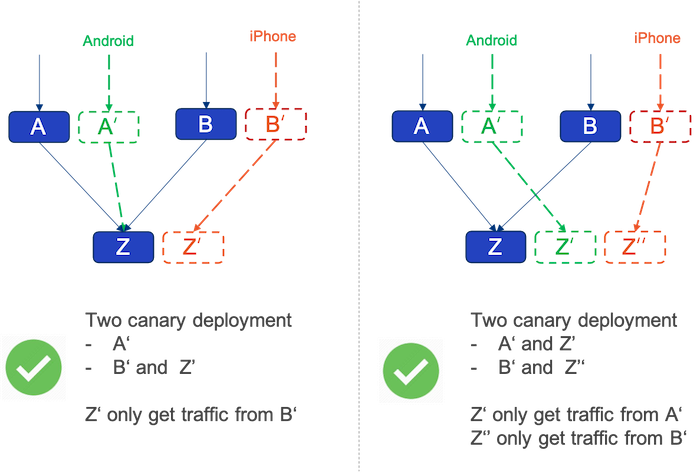

Let’s take a step back and analyze the different cases of canary release, starting with the figure.

For example, A and B both rely on Z. A' tests Android traffic and B' tests iPhone traffic, so they test two different user groups. If both rely on Z' for testing, Z' receives two different kinds of canary traffic and becomes a source of confusion.

There are two better approaches, as shown in the figures.

The left one is to route traffic from A' to Z, and the right one is to route traffic from A' and B' to Z' and Z'' separately. The principle that makes many things easier is:

One canary release, one traffic rule.
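The Z' conflict described above can be detected mechanically. The sketch below assumes canary releases are declared as a mapping from a release name to the (service, version) pairs it owns; that layout and the function name `find_shared_versions` are assumptions for the example, not a format used by any specific platform.

```python
from collections import defaultdict


def find_shared_versions(releases: dict) -> dict:
    """Return every (service, version) pair claimed by more than one release."""
    owners = defaultdict(list)
    for release, versions in releases.items():
        for service_version in versions:
            owners[service_version].append(release)
    return {sv: names for sv, names in owners.items() if len(names) > 1}


# Both releases test against Z': the confusing case.
conflicting = {
    "android": [("A", "A'"), ("Z", "Z'")],
    "iphone": [("B", "B'"), ("Z", "Z'")],
}
print(find_shared_versions(conflicting))
# {('Z', "Z'"): ['android', 'iphone']}

# Each release gets its own version of Z: no conflict.
separated = {
    "android": [("A", "A'"), ("Z", "Z'")],
    "iphone": [("B", "B'"), ("Z", "Z''")],
}
print(find_shared_versions(separated))
# {}
```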

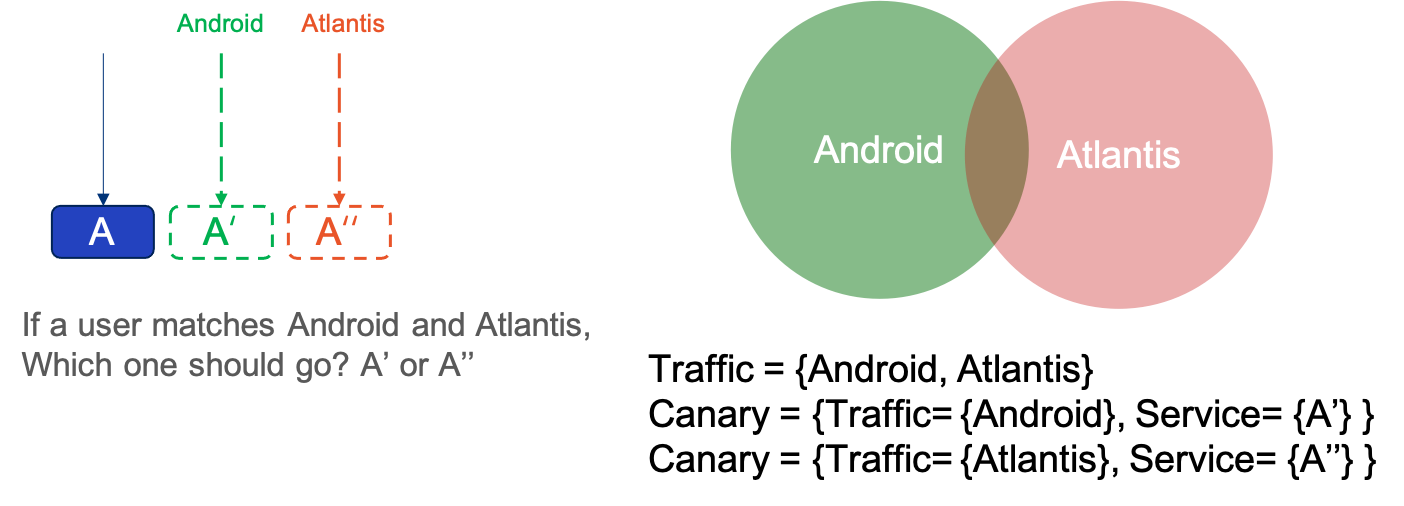

Even if the canary conflict problem is solved, the traffic rules themselves may still overlap. The previous example used Android and iPhone user traffic, which never overlap. But if one canary tests Android and the other tests Atlantis, some traffic matches the rules of both canary releases.

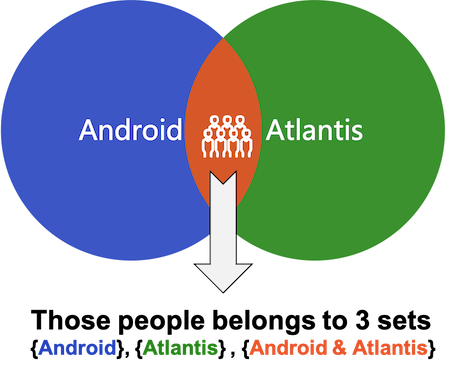

So if the traffic is from a common subset, such as traffic from Android devices in Atlantis, how should we route that traffic at this time?

This can be abstracted mathematically as two problems about sets:

- Set matching: the user traffic is a set, and the traffic rule of each canary release is a set.

- Multiple matching: when several canary traffic rules match, which one should be selected?

5. Case Study - Multiple Canary Rules Matching Problems

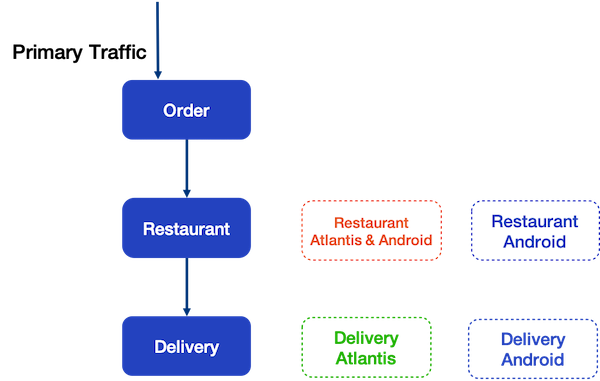

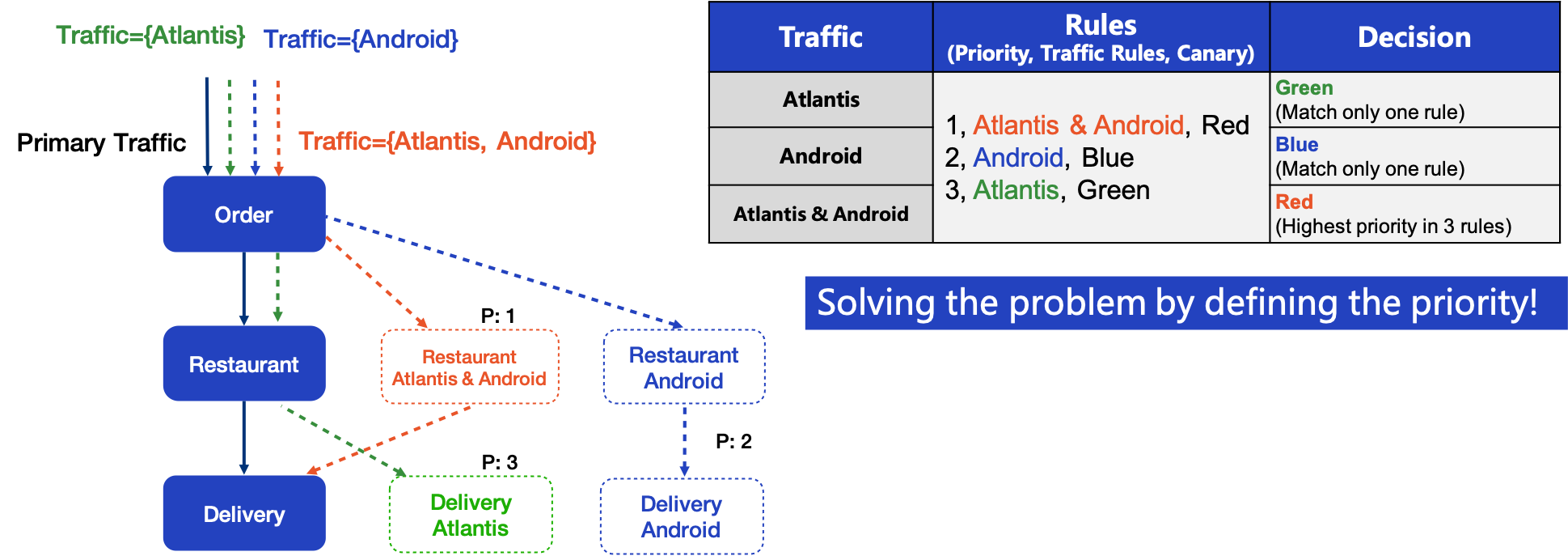

Let’s use an example to demonstrate all the problems mentioned so far. Consider the backend service stack of a food delivery app, which consists of three microservices: the Order service, the Restaurant service, and the Delivery service.

- Order service has no canary

- Restaurant service has two canaries

  - The first canary is for Android traffic from Atlantis

  - The second canary is for all Android traffic

- Delivery service also has two canaries

  - The first canary is for traffic from Atlantis

  - The second canary is for Android traffic

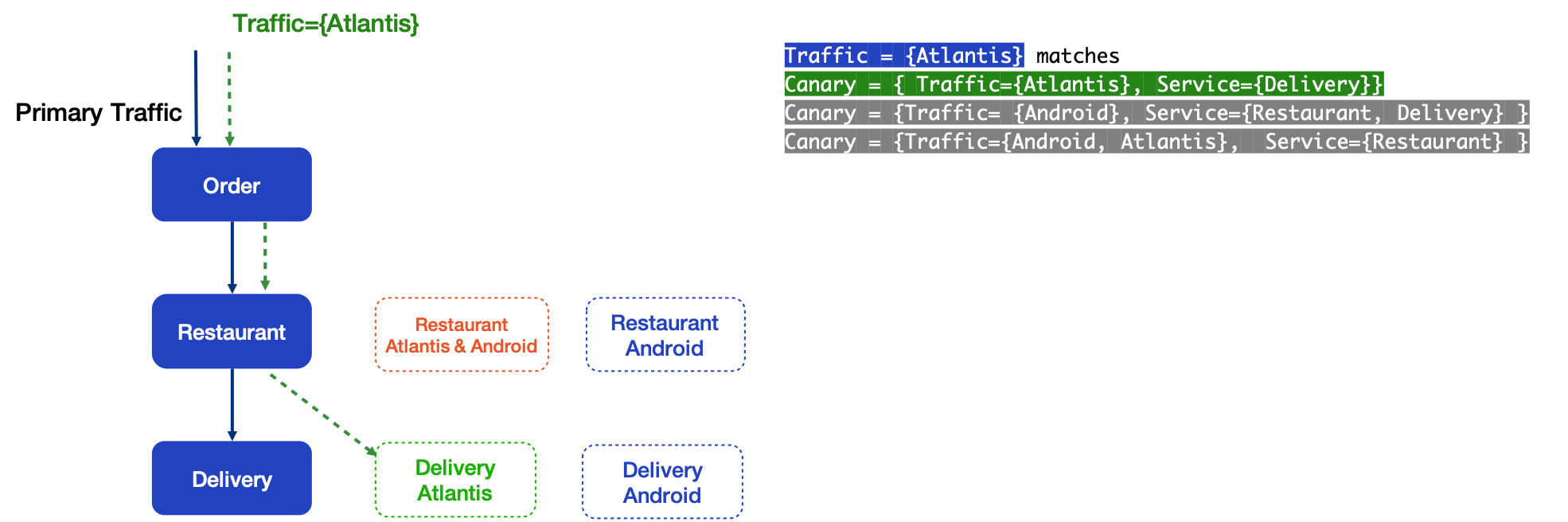

5.1 Perfect Match

When the system receives traffic with the Atlantis user tag, it matches the routing rule of the Delivery service's Atlantis canary, and the traffic follows the green dotted line.

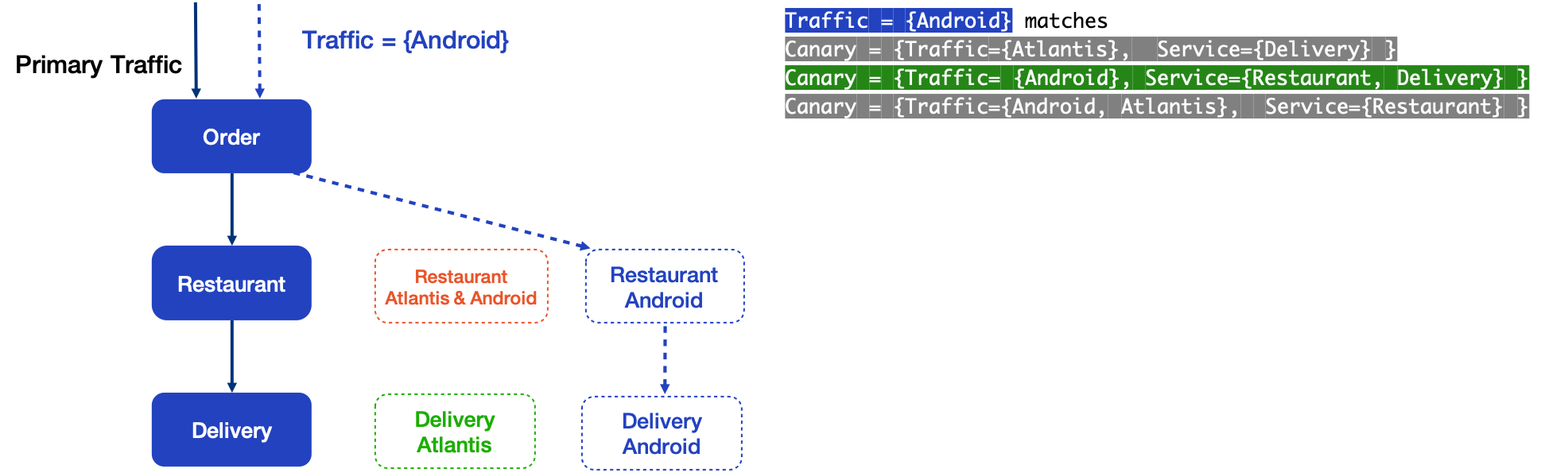

Traffic with the Android user tag, on the other hand, matches the routing rules of the Android canary for both Restaurant and Delivery. This traffic follows the blue dotted line.

5.2 Multiple Match

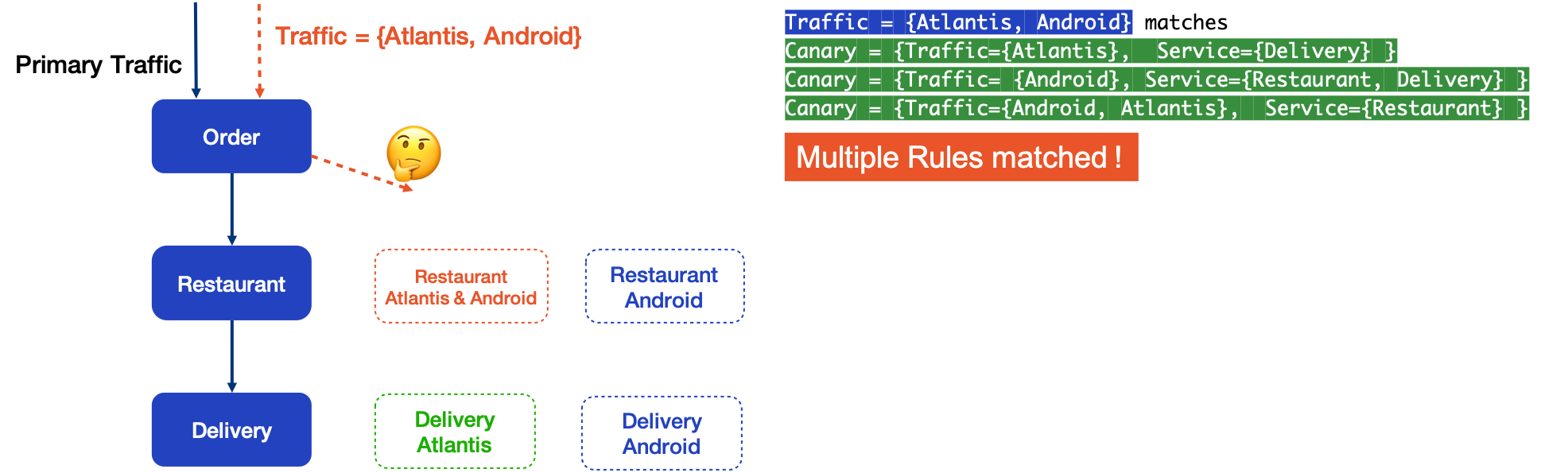

But what happens for traffic that contains both Android and Atlantis tags? It matches all three canaries and there is no unambiguous way to route the traffic.

From a set point of view, Atlantis traffic matches only the green canary and Android traffic matches only the blue canary, while Atlantis & Android traffic matches every canary.

In mathematical terms, a canary rule matches when the set of tags it requires is a subset of the tags carried by the user traffic. So how should we handle the multiple-match problem?

The figure above shows that users who are Android and also Atlantis belong to all three collections at the same time, which is why they match all three canary rules.
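The subset test can be written down directly. In this sketch the three canary releases are named after their colors in the figures, and each rule is reduced to a set of plain tag strings. Both choices are simplifications made for the example.

```python
# Tags required by each canary release, named after the colors in the figures.
CANARIES = {
    "red": frozenset({"android", "atlantis"}),  # Restaurant: Android from Atlantis
    "blue": frozenset({"android"}),             # Restaurant and Delivery: Android
    "green": frozenset({"atlantis"}),           # Delivery: Atlantis
}


def matching_canaries(request_tags) -> list:
    """Return every canary whose required tags are a subset of the request tags."""
    tags = frozenset(request_tags)
    return sorted(name for name, required in CANARIES.items() if required <= tags)


print(matching_canaries({"atlantis"}))             # ['green']
print(matching_canaries({"android"}))              # ['blue']
print(matching_canaries({"android", "atlantis"}))  # ['blue', 'green', 'red']
```

The last call is the multiple-match case: the request carries both tags, so all three subset tests succeed.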

5.3 Match Priority

A simple way to solve this problem is to give each canary a priority. Each traffic rule carries a number indicating its priority, and a smaller number means a higher priority. As the figure shows, Atlantis & Android traffic matches three canaries, but because the Restaurant Atlantis & Android canary has the highest priority, priority one, the red canary is selected.

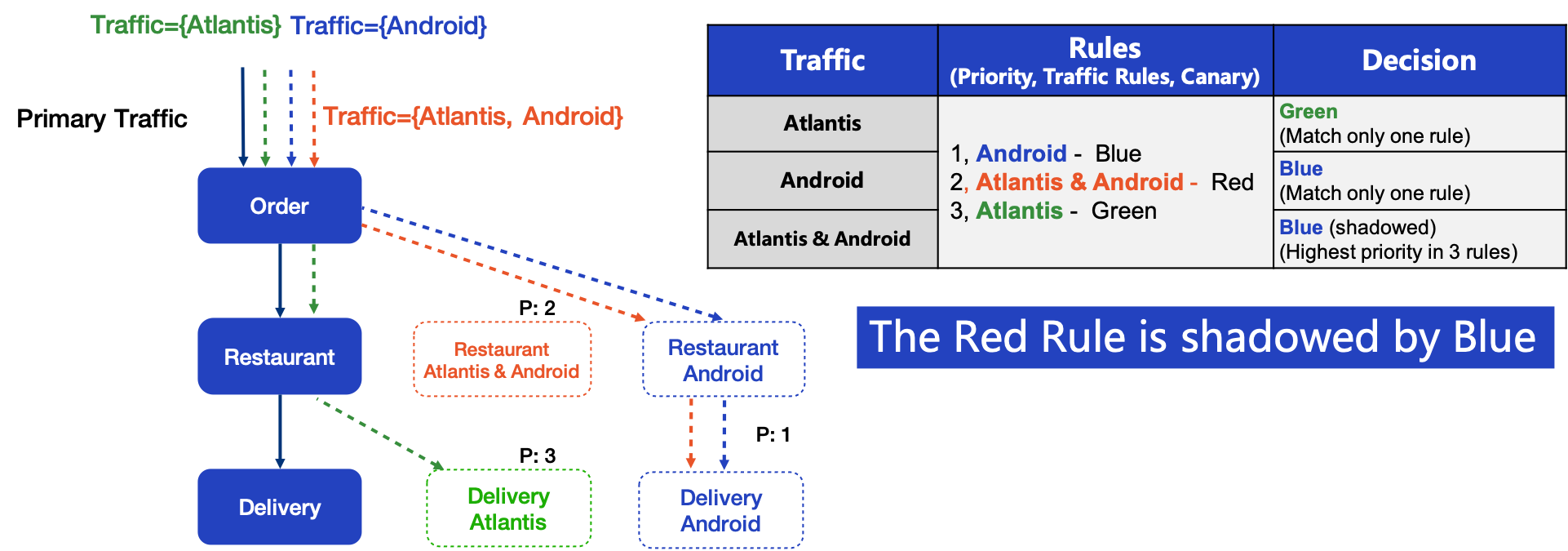
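Priority-based selection is a small extension of the subset test. In the sketch below, only the red rule's priority of one comes from the example above. The priorities given to blue and green are assumed values, and the `CanaryRule` class is a hypothetical structure for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CanaryRule:
    name: str
    required_tags: frozenset
    priority: int  # smaller number = higher priority


RULES = [
    CanaryRule("red", frozenset({"android", "atlantis"}), 1),
    CanaryRule("blue", frozenset({"android"}), 2),    # assumed priority
    CanaryRule("green", frozenset({"atlantis"}), 3),  # assumed priority
]


def select_canary(request_tags, rules=RULES) -> Optional[str]:
    """Pick the highest-priority matching rule, or None for primary traffic."""
    tags = frozenset(request_tags)
    matched = [rule for rule in rules if rule.required_tags <= tags]
    if not matched:
        return None
    return min(matched, key=lambda rule: rule.priority).name


print(select_canary({"android", "atlantis"}))  # red
print(select_canary({"android"}))              # blue
print(select_canary({"iphone"}))               # None
```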

5.4 Match Shadow

Even though priorities solve the multiple-match problem, a misconfiguration can still cause another one: the traffic shadow problem. In this example, the red rule is shadowed by the blue rule, because blue has the higher priority and every request that matches red also matches blue. As a result, no traffic is ever routed to the Atlantis & Android canary.

This is the same reasoning as exception handling in C++ or Java: if the handler for the broader exception type comes first, a handler for a narrower type placed after it will never catch anything.
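A shadowed rule can be found before deployment with a simple check: a rule can never be selected if some other rule has a higher priority and requires a subset of its tags. The sketch below assumes rules are given as `(name, required_tags, priority)` tuples with distinct priorities. The function name `find_shadowed` is made up for the example.

```python
def find_shadowed(rules: list) -> list:
    """Return (shadowed_rule, shadowing_rule) pairs.

    rules: list of (name, required_tags, priority); a smaller number wins.
    """
    shadowed = []
    for name, tags, priority in rules:
        for other, other_tags, other_priority in rules:
            # Every request matching `name` also matches `other`,
            # and `other` is always preferred.
            if (other != name and other_priority < priority
                    and set(other_tags) <= set(tags)):
                shadowed.append((name, other))
                break
    return shadowed


# Misconfigured: the broad blue rule outranks the narrow red rule.
bad = [("blue", {"android"}, 1), ("red", {"android", "atlantis"}, 2)]
print(find_shadowed(bad))   # [('red', 'blue')]

# Narrower rule first: nothing is shadowed.
good = [("red", {"android", "atlantis"}, 1), ("blue", {"android"}, 2),
        ("green", {"atlantis"}, 3)]
print(find_shadowed(good))  # []
```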

6. Technical Implementation

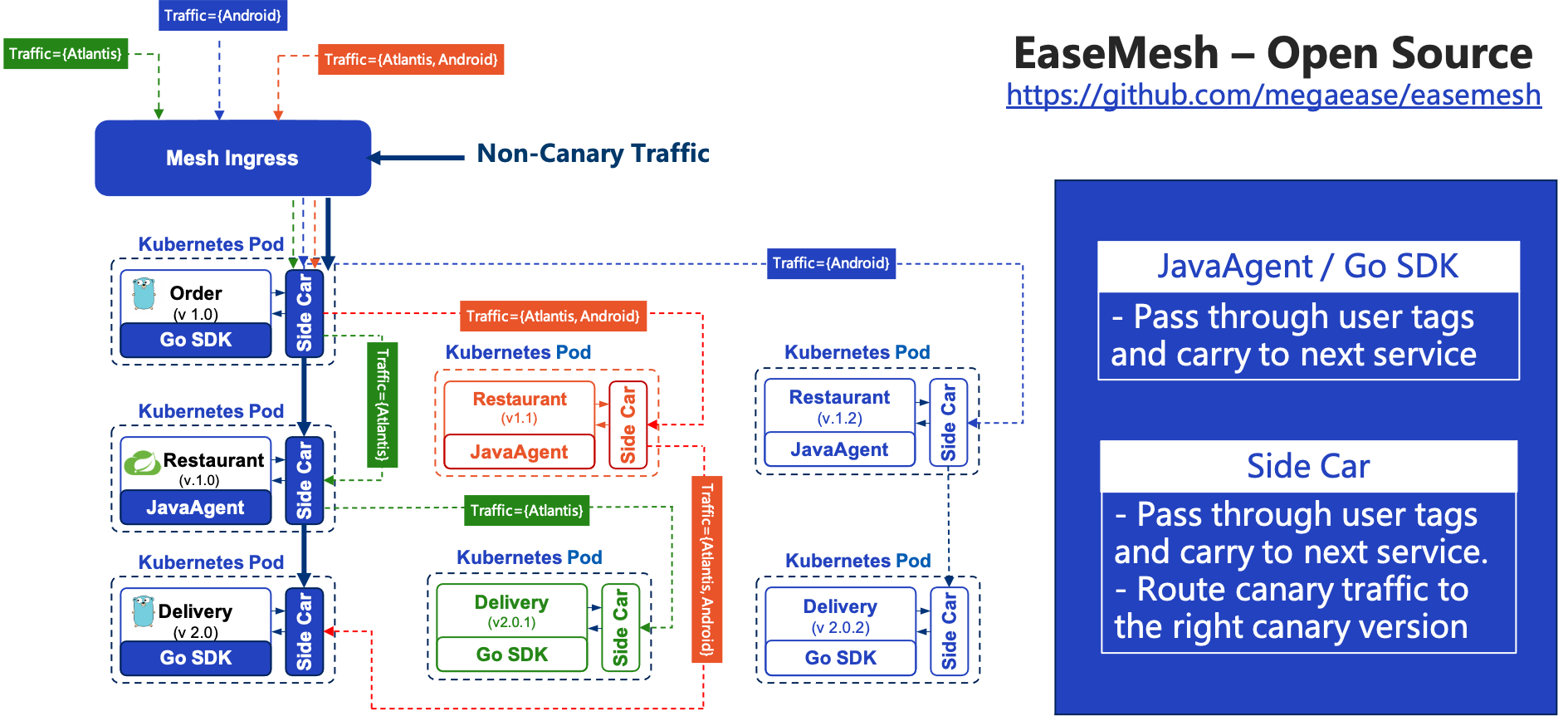

Implementing full-stack multiple canary releases is not easy. We have done it in our open source project, EaseMesh.

Here, let’s explain the technical details of EaseMesh and give an overview of how we implement multiple canary releases.

First of all, all our services are running in Kubernetes Pods. The three services in here correspond to three services in EaseMesh, and even different versions under the same service are part of one service.

So a mesh service will have multiple versions running at the same time. To route the traffic to the correct canary services, two things need to be accomplished.

The first thing to ensure is to pass through User Tags, without losing any user information throughout the service chain.

This involves both the sidecar and the business application. The sidecar knows all the canary traffic rules and user tags, such as specific HTTP headers, and forwards them with the traffic.

The business application itself also needs to pass through the user tags. For Java, this is done by our officially supported JavaAgent in cooperation with the sidecar, without requiring any awareness from the application (both EaseAgent and Easegress are already open source). The sidecar notifies the JavaAgent to pass through all the information. For other languages such as Golang, which has no bytecode instrumentation, a simple SDK is enough to forward the user tags.

So EaseMesh supports this advanced feature across multiple languages, as long as the user tags are available.
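To give a feel for what "forwarding the user tags" means in application code, here is a minimal sketch of the idea. It is not the EaseMesh SDK or the EaseAgent API: the header prefix `x-user-tag-` and both function names are hypothetical, chosen only to show tag headers being copied from an inbound request onto an outbound one.

```python
TAG_PREFIX = "x-user-tag-"  # hypothetical prefix, not an EaseMesh convention


def extract_tags(inbound_headers: dict) -> dict:
    """Collect the user-tag headers from an incoming request."""
    return {
        key.lower(): value
        for key, value in inbound_headers.items()
        if key.lower().startswith(TAG_PREFIX)
    }


def with_tags(outbound_headers: dict, inbound_headers: dict) -> dict:
    """Return a copy of the outbound headers with the user tags added."""
    merged = dict(outbound_headers)
    merged.update(extract_tags(inbound_headers))
    return merged


inbound = {"X-User-Tag-OS": "android", "Cookie": "abc"}
print(with_tags({"Accept": "application/json"}, inbound))
# {'Accept': 'application/json', 'x-user-tag-os': 'android'}
```

Only the tag headers are copied. Unrelated inbound headers such as `Cookie` are not leaked into the downstream call.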

The second requirement for EaseMesh Canary releases is Traffic routing.

All components, including the EaseMesh IngressController and the sidecar in each service Pod, can route canary traffic to the corresponding canary version of the next service. As the figure shows, every service component passes its traffic through the sidecar, whether it is receiving or sending requests. When sending a request outbound, the sidecar inspects the traffic characteristics and decides whether the request needs to be dispatched to one of the canary versions of the next service. This is all done by the sidecar, without involving the agent or the SDK.

7. Summary

Let’s now summarize the design principles of the platform and the best practices for its operation.

7.1 Design Principles

- One canary service version can belong to at most one canary release.

- One request can be scheduled to at most one canary release.

- The canary release must be explicitly selected by incoming traffic.

- Normal traffic that does not match canary rules goes through primary deployments.

7.2 Best Practices

- Tag traffic using user-side information. A client IP address, for example, is not a good tag.

- When tagged traffic overlaps, use explicit priority to guide the traffic router.

- A canary rule with a smaller scope should have a higher priority.